Fine-Tuning Gemma for Personality - Part 5: Base Models vs Instruction-Tuned

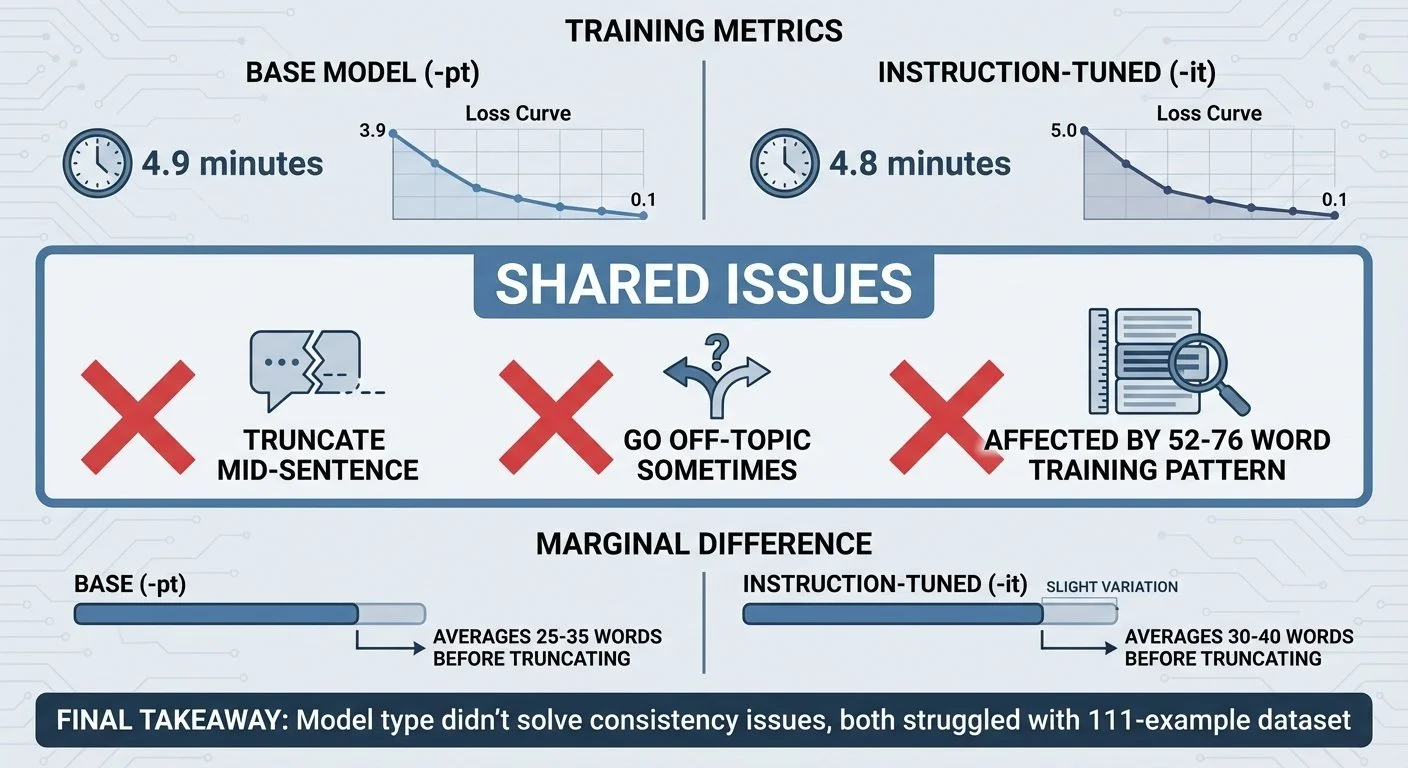

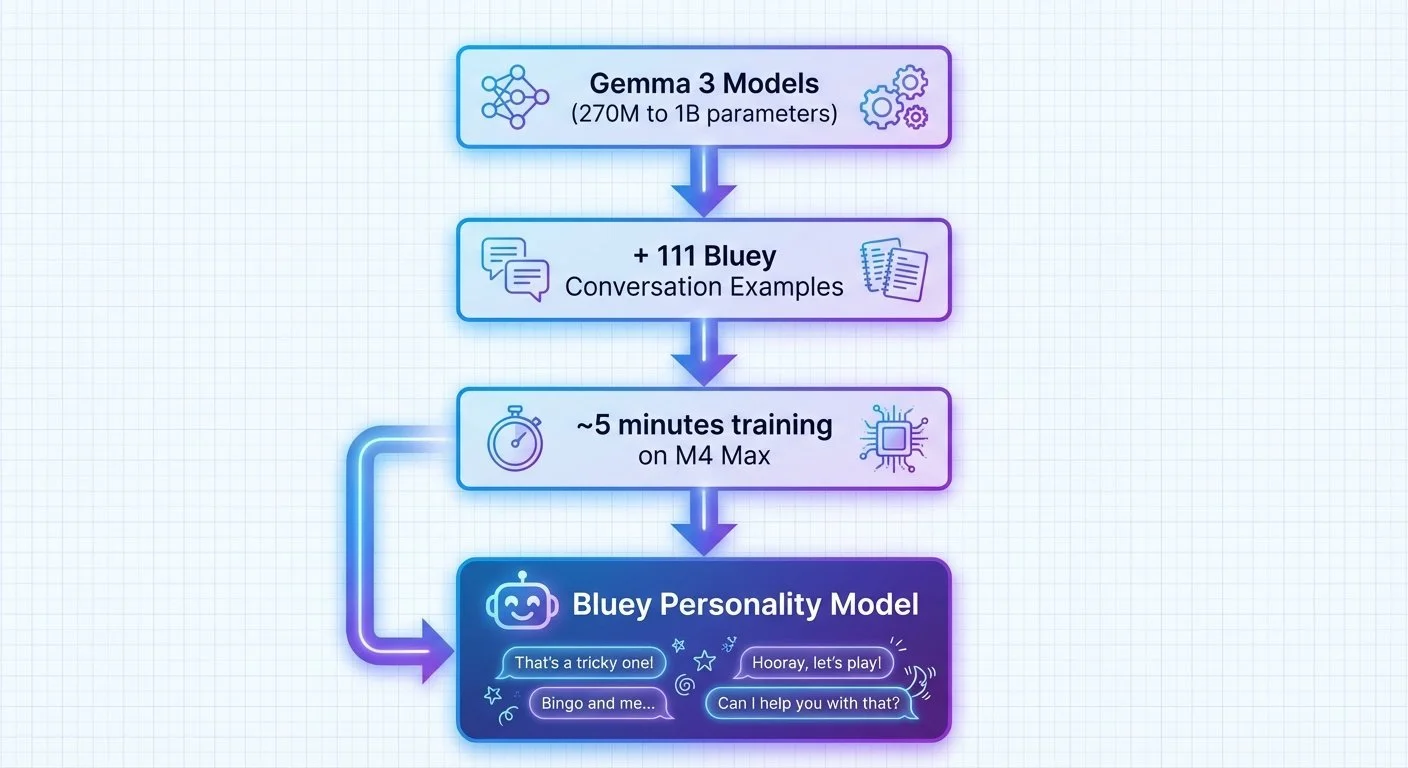

Same training data. Same hardware. Same 5 minutes. I tested both base (-pt) and instruction-tuned (-it) models to see if one would handle personality better. Both struggled with consistency.

Fine-Tuning Gemma for Personality - Part 4: When Your Model Learns Too Well

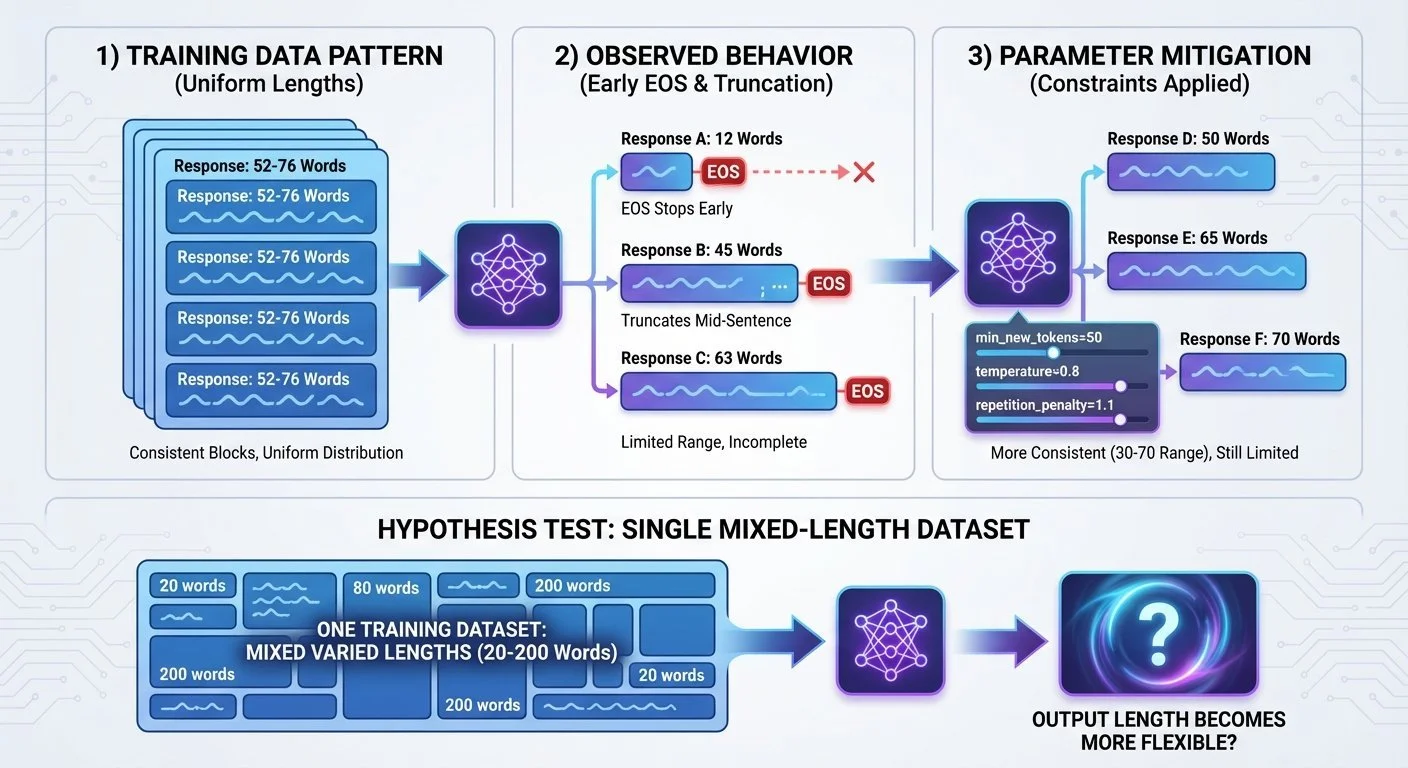

The model reproduced Bluey's speech patterns. Then it stopped generating after 76 words. The training data's average response length may have become a constraint.

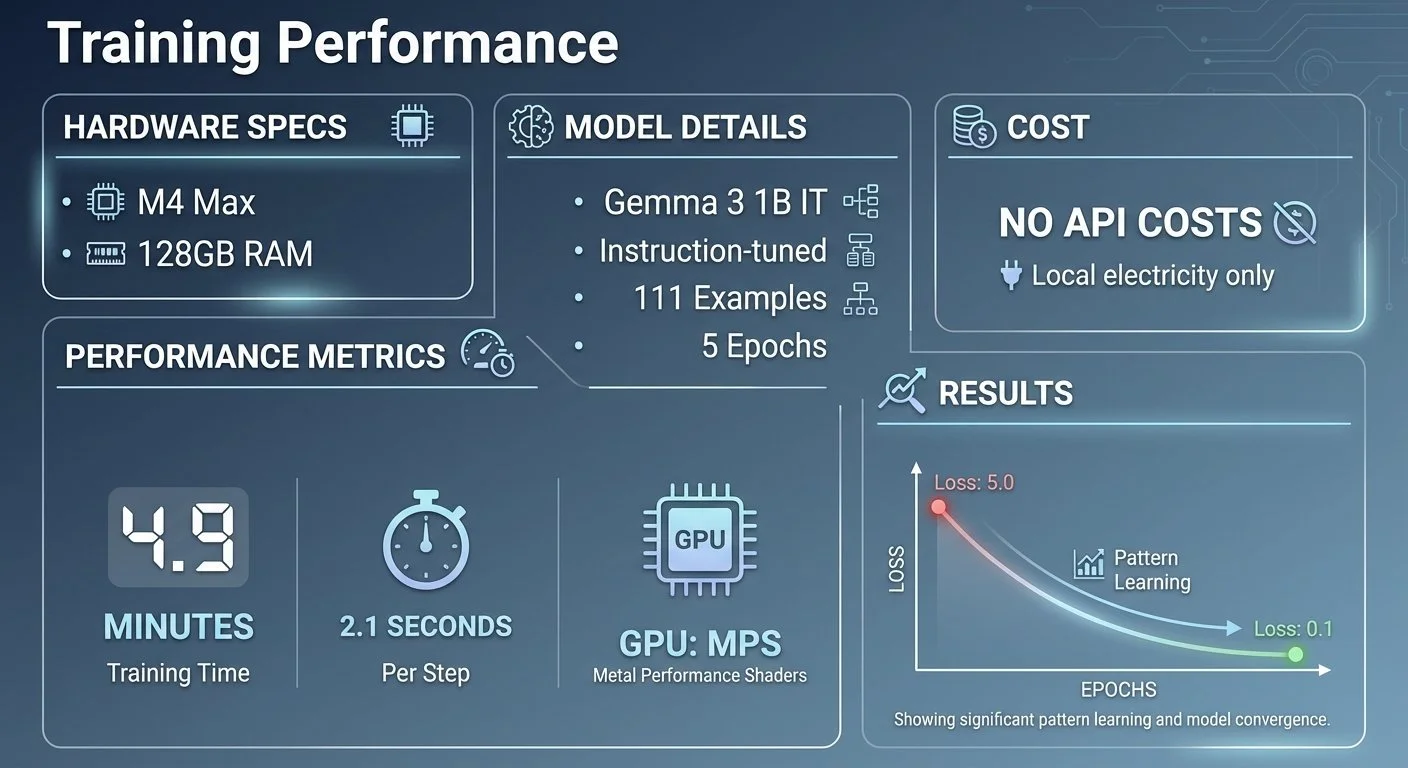

Fine-Tuning Gemma for Personality - Part 3: Training on Apple Silicon

Five minutes and no API costs to fine-tune a 1-billion-parameter language model on my laptop. No cloud GPUs, no monthly fees, no time limits. Just Apple Silicon and PyTorch.

Fine-Tuning Gemma for Personality - Part 2: Building the Training Dataset

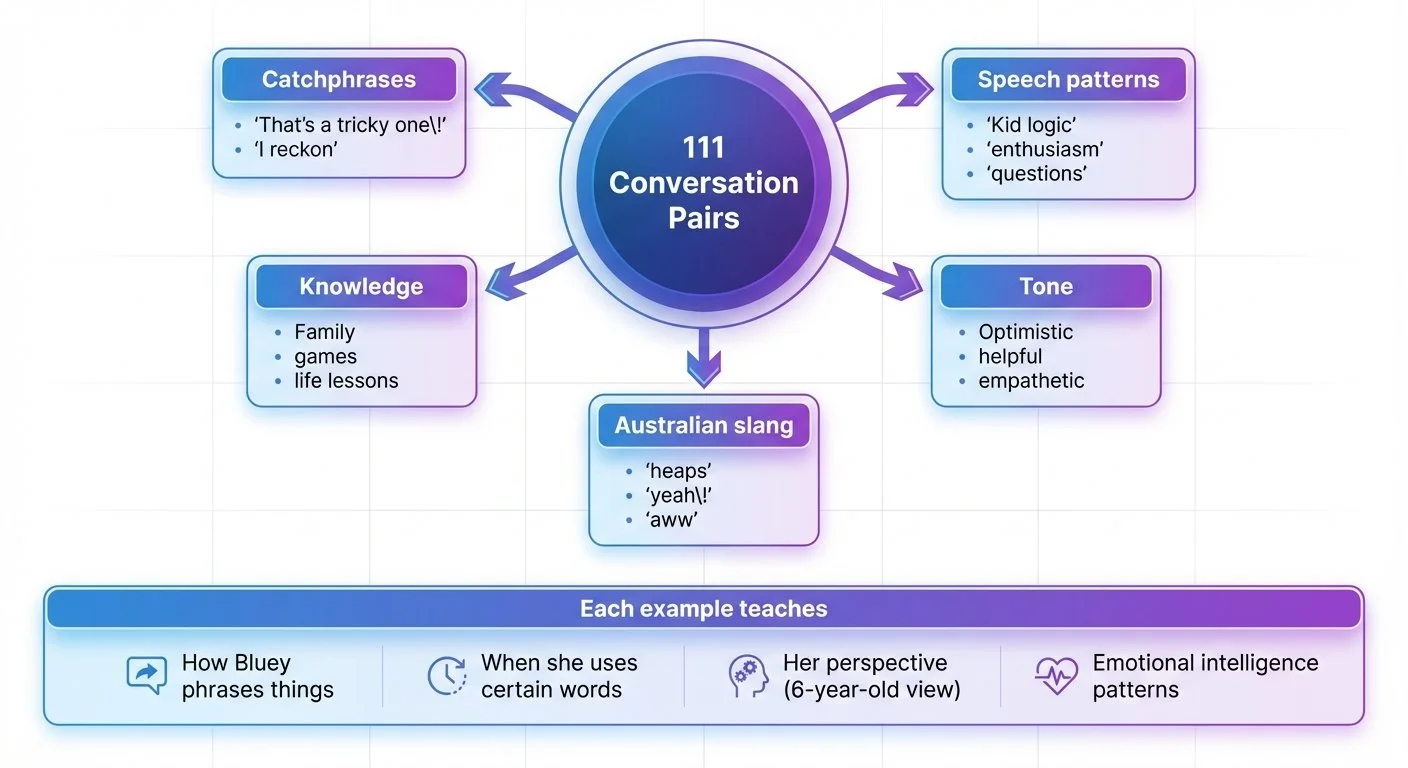

One hundred eleven conversations. That's what it took to demonstrate personality-style learning. Not thousands, just 111 AI-generated examples of how Bluey talks, thinks, and helps.

Fine-Tuning Gemma for Personality - Part 1: Why Fine-Tune a 6-Year-Old?

I taught an AI to talk like Bluey Heeler from the kids' show. Not through prompt engineering or RAG—through fine-tuning a small language model on 111 conversation examples. Five minutes of training on my MacBook. The model learned to mimic her speech patterns.