The Bart Test - Part 6: The American Ninja Warrior Problem

The process validation from [Part 5](/blog/bart-test-part-5-redesigning-from-scratch) worked. Judges completed the paper sheets in about 10 minutes. They engaged with the task. I got detailed feedback.

When I sat down to analyze the [completed ratings](https://github.com/bart-mosaicmeshai/bart-test/blob/main/evaluation_sheets/20251228/completed_ratings_20251228.json) from Experiment 04, the patterns were clear. But I realized I was at risk of misinterpreting what they meant.

The Bart Test - Part 5: Redesigning From Scratch

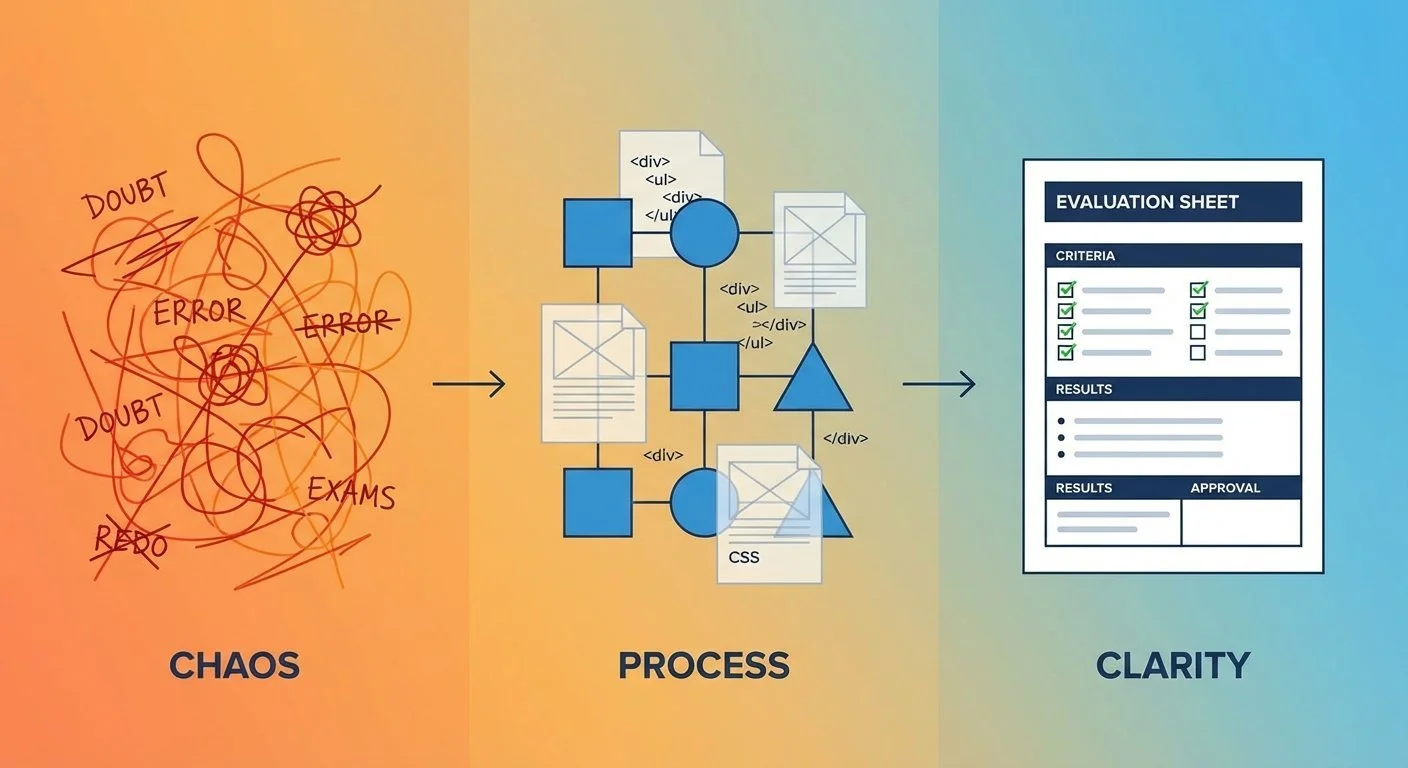

After my teens ghosted the frontier model evaluation, I sat with a choice: give up on this whole thing, or try again.

The doubt was real. Maybe the Bart Test would never work. Maybe asking teenagers to evaluate AI-generated slang was fundamentally flawed. But I couldn't shake the insights from [Part 3](/blog/bart-test-part-3-the-zoo-not-duck-problem): the "zoo not duck" problem, the slang half-life, the "trying too hard" pattern. Those felt real.

So I decided to try again. Not because I was confident it would work, but because I wasn't ready to give up.

The Bart Test - Part 4: When My Teen Judges Ghosted Me

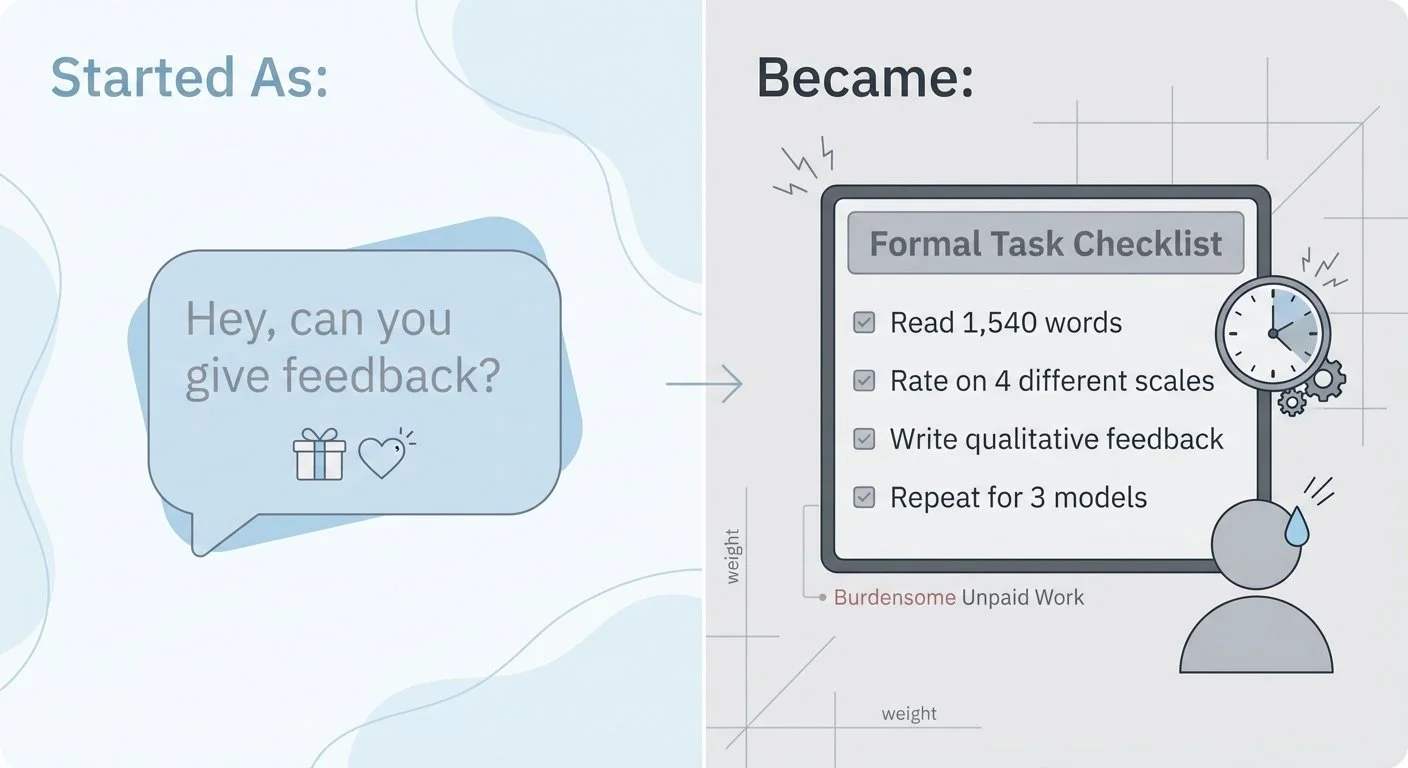

I tested GPT-5.2, Claude Opus 4.5, and Gemini 3 Pro with the baseline prompt. The [outputs](https://github.com/bart-mosaicmeshai/bart-test/tree/main/results/03_experiment_runs) were ready. I sent the first story ([GPT's 1,540-word epic](https://github.com/bart-mosaicmeshai/bart-test/blob/main/results/03_experiment_runs/03a_gpt5.2_baseline_20251218_202909.json)) to my kids via text.

No response.

I waited a few days. Still nothing.

A week passed. They weren't being difficult. They just... didn't respond.